Machine learning model experiments

DETAILS:
Tier: Free, Premium, Ultimate
Offering: GitLab.com, Self-managed, GitLab Dedicated

- Introduced in GitLab 15.11 as an experiment release with a flag named ml_experiment_tracking. Disabled by default. To enable the feature, an administrator can enable the feature flag named ml_experiment_tracking.

NOTE: Model experiment tracking is an experimental feature. Refer to https://gitlab.com/gitlab-org/gitlab/-/issues/381660 for feedback and feature requests.

When creating machine learning models, data scientists often experiment with different parameters, configurations, and feature engineering to improve the performance of the model. Keeping track of all this metadata and the associated artifacts so that the data scientist can later replicate the experiment is not trivial. Machine learning experiment tracking enables them to log parameters, metrics, and artifacts directly into GitLab, giving easy access later on.

These features have been proposed:

- Searching experiments.

- Visual comparison of candidates.

- Creating, deleting, and updating candidates through the GitLab UI.

For feature requests, see epic 9341.

What is an experiment?

In a project, an experiment is a collection of comparable model candidates. Experiments can be long-lived (for example, when they represent a use case), or short-lived (results from hyperparameter tuning triggered by a merge request), but usually hold model candidates that have a similar set of parameters measured by the same metrics.

Model candidate

A model candidate is one variation of the training of a machine learning model, which can eventually be promoted to a version of the model.

The goal of a data scientist is to find the model candidate whose parameter values lead to the best model performance, as indicated by the given metrics.

Some example parameters:

- Algorithm (such as linear regression or decision tree).

- Hyperparameters for the algorithm (learning rate, tree depth, number of epochs).

- Features included.
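
The relationship between an experiment, its candidates, their parameters, and their metrics can be sketched as a small data model. This is only an illustration, not the GitLab internal schema: the class names `Candidate` and `Experiment`, the `best_candidate` helper, and all sample values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """One training variation: the parameters used and the metrics measured."""
    name: str
    params: dict[str, str]
    metrics: dict[str, float]


@dataclass
class Experiment:
    """A collection of comparable candidates measured by the same metrics."""
    name: str
    candidates: list[Candidate] = field(default_factory=list)

    def best_candidate(self, metric: str, higher_is_better: bool = True) -> Candidate:
        # Only candidates that logged the metric can be compared.
        scored = [c for c in self.candidates if metric in c.metrics]
        if not scored:
            raise ValueError(f"no candidate logged metric {metric!r}")
        pick = max if higher_is_better else min
        return pick(scored, key=lambda c: c.metrics[metric])


# Hypothetical sample data: two candidates that differ in one hyperparameter.
experiment = Experiment(
    name="churn-prediction",
    candidates=[
        Candidate("tree-depth-3", {"algorithm": "decision_tree", "max_depth": "3"}, {"accuracy": 0.81}),
        Candidate("tree-depth-8", {"algorithm": "decision_tree", "max_depth": "8"}, {"accuracy": 0.86}),
    ],
)
best = experiment.best_candidate("accuracy")
```

Because both candidates log the same metric, they can be ranked directly, which is why an experiment usually groups candidates with similar parameters and metrics.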

Track new experiments and candidates

Experiments and candidates can be tracked only through the MLflow client. See MLflow client compatibility for more information on how to use GitLab as a backend for the MLflow client.
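
A minimal sketch of logging one candidate from Python. It assumes that the `mlflow` package is installed, that GitLab serves the MLflow-compatible endpoint at `/api/v4/projects/<project_id>/ml/mlflow` as described on the MLflow client compatibility page, and that an access token is exported as `MLFLOW_TRACKING_TOKEN`. The helper names, experiment name, and logged values are hypothetical placeholders.

```python
def gitlab_tracking_uri(gitlab_host: str, project_id: int) -> str:
    """Build the tracking URI (assumed endpoint layout, see the text above)."""
    return f"{gitlab_host.rstrip('/')}/api/v4/projects/{project_id}/ml/mlflow"


def log_example_candidate(tracking_uri: str, experiment_name: str) -> None:
    """Log one MLflow run; GitLab lists it as a candidate of the experiment."""
    # Imported here so the URI helper above works without mlflow installed.
    import mlflow

    # The token is read by MLflow from the MLFLOW_TRACKING_TOKEN variable.
    mlflow.set_tracking_uri(tracking_uri)
    mlflow.set_experiment(experiment_name)
    with mlflow.start_run():
        mlflow.log_param("max_depth", 8)      # placeholder parameter
        mlflow.log_metric("accuracy", 0.86)   # placeholder metric
```

For example, `log_example_candidate(gitlab_tracking_uri("https://gitlab.example.com", 42), "churn-prediction")` would log to project 42 on a hypothetical instance.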

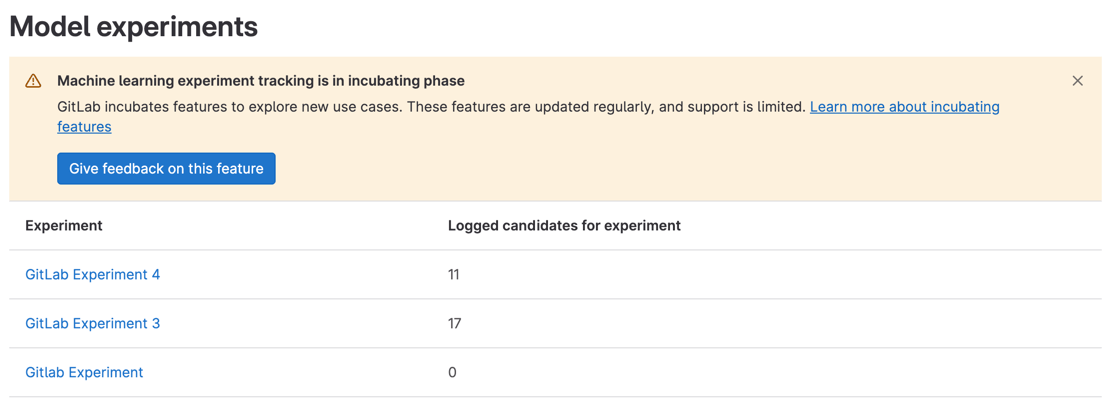

Explore model candidates

To list the current active experiments, either go to https/-/ml/experiments or:

- On the left sidebar, select Search or go to and find your project.

- Select Analyze > Model experiments.

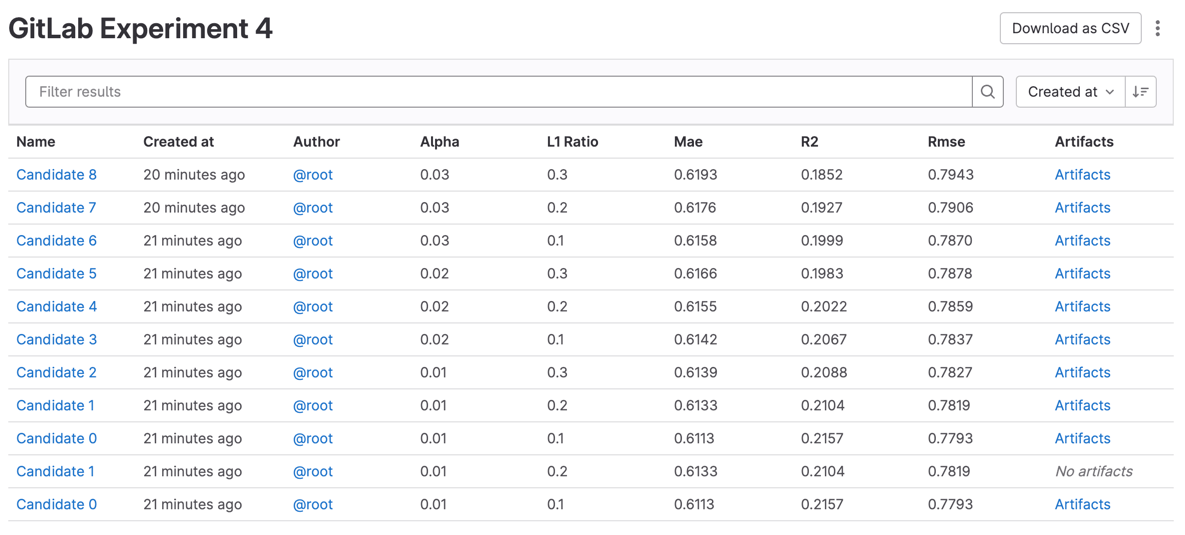

- To display all candidates that have been logged, along with their metrics, parameters, and metadata, select an experiment.

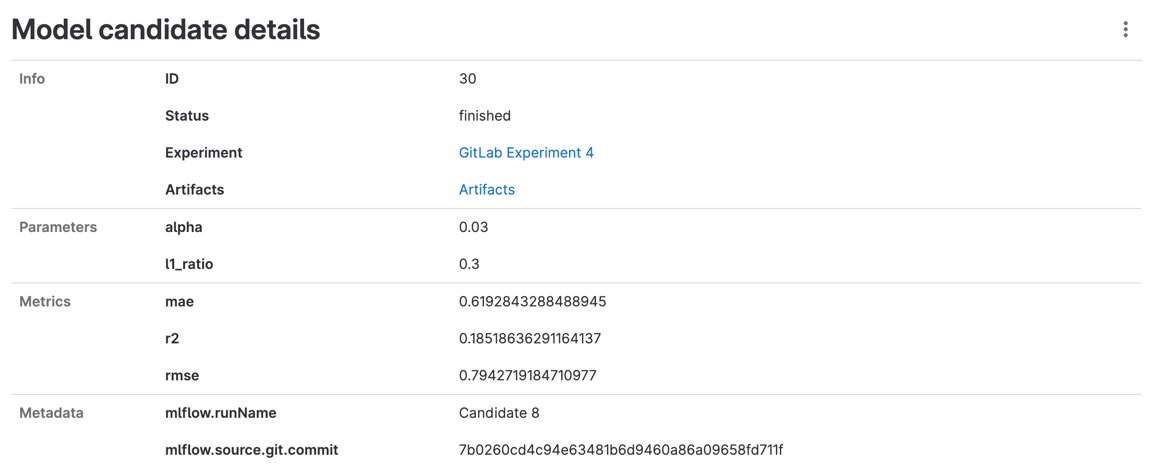

- To display details for a candidate, select Details.

View log artifacts

Candidate artifacts are saved as generic packages, and follow all their limitations. After an artifact is logged for a candidate, all artifacts logged for the candidate are listed in the package registry. The package name for a candidate is ml_experiment_<experiment_id>, where the version is the candidate IID. The link to the artifacts can also be accessed from the Experiment Candidates list or Candidate detail.
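
The naming convention can be sketched as a small helper. The package name and version follow the rule stated above. The download URL assumes that the standard generic packages API path (`/projects/:id/packages/generic/:package_name/:package_version/:file_name`) applies to these packages. The function names, host, and IDs are hypothetical.

```python
from urllib.parse import quote


def artifact_package(experiment_id: int, candidate_iid: int) -> tuple[str, str]:
    """Return (package_name, package_version) for a candidate's artifacts."""
    return f"ml_experiment_{experiment_id}", str(candidate_iid)


def artifact_download_url(
    gitlab_host: str,
    project_id: int,
    experiment_id: int,
    candidate_iid: int,
    file_name: str,
) -> str:
    """Build a download URL, assuming the generic packages API path."""
    name, version = artifact_package(experiment_id, candidate_iid)
    return (
        f"{gitlab_host.rstrip('/')}/api/v4/projects/{project_id}"
        f"/packages/generic/{name}/{version}/{quote(file_name)}"
    )
```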

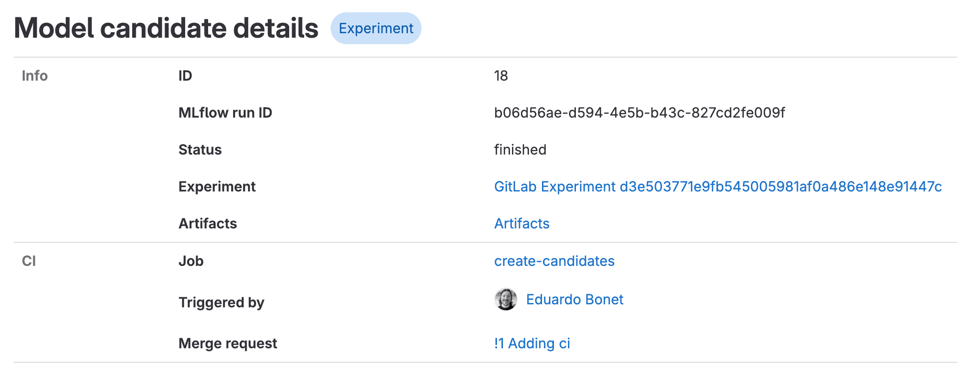

View CI information

- Introduced in GitLab 16.1.

Candidates can be associated with the CI job that created them, which provides quick links to the merge request, the pipeline, and the user who triggered the pipeline.
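
One way the association might be made from training code is to tag the MLflow run with the CI job ID. The sketch below assumes the tag name `gitlab.CI_JOB_ID`, taken from the MLflow client compatibility documentation and not stated on this page. `GITLAB_CI` and `CI_JOB_ID` are predefined GitLab CI/CD variables. The helper name `ci_job_tags` is hypothetical.

```python
import os
from typing import Mapping


def ci_job_tags(env: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Return the tags to set on a run when executing inside a GitLab CI job."""
    # Both variables are set automatically inside GitLab CI/CD jobs.
    if env.get("GITLAB_CI") and env.get("CI_JOB_ID"):
        # Assumed tag name, see the note above.
        return {"gitlab.CI_JOB_ID": env["CI_JOB_ID"]}
    # Outside CI there is nothing to associate.
    return {}
```

Inside an active MLflow run, the result could be applied with `mlflow.set_tags(ci_job_tags())`. Outside of CI the helper returns an empty dictionary, so local runs are unaffected.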

Related topics

- Development details in epic 8560.

- Add feedback in issue 381660.